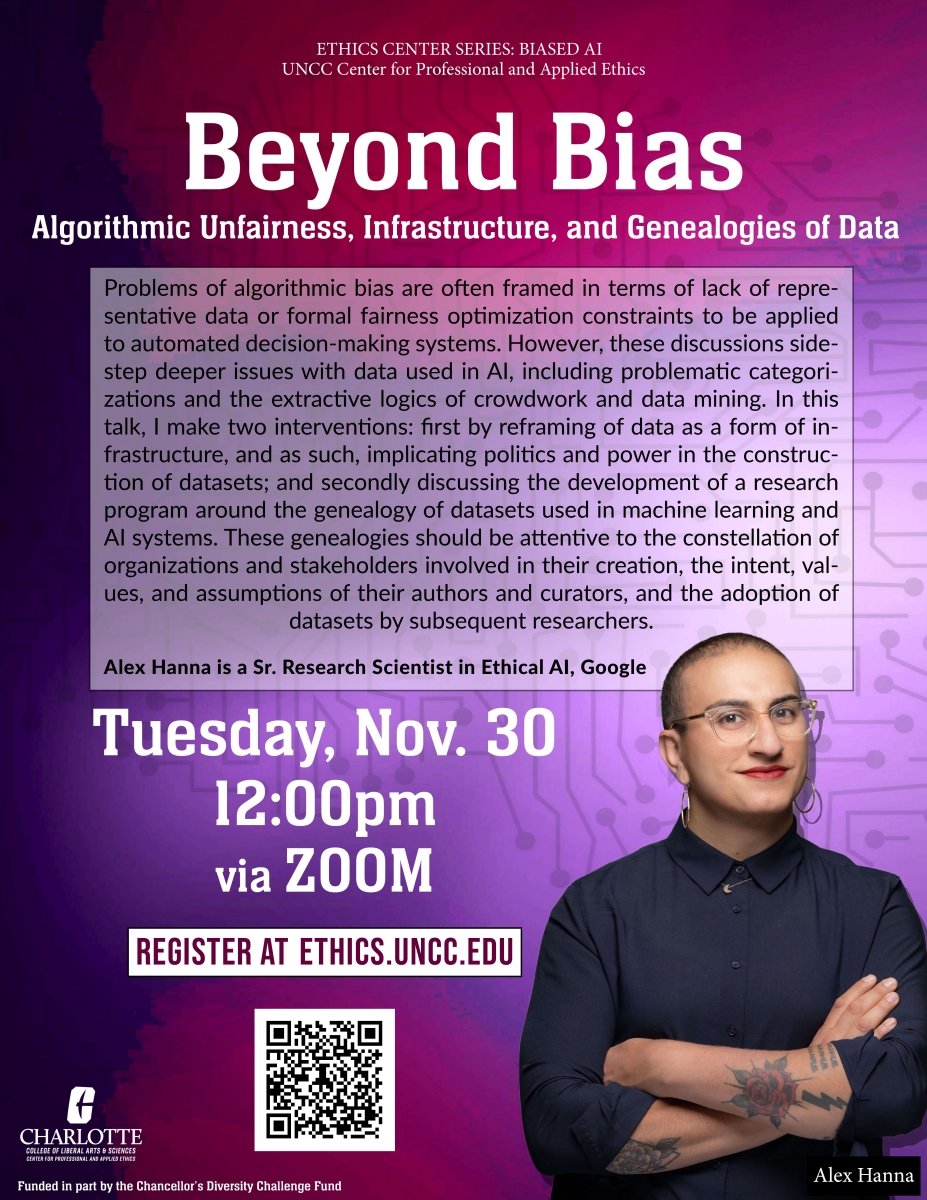

Nov 30, 12:00 ET: Alex Hanna (Sr. Research Scientist in Ethical AI, Google) presents “Beyond Bias: Algorithmic Unfairness, Infrastructure, and Genealogies of Data” as part of our series on Bias in AI.

--> Zoom Registration: https://uncc.zoom.us/meeting/register/tJIoceGsqjsjG9BBxUSxl4Yo0XsMk1iPp_7g

Abstract: Problems of algorithmic bias are often framed in terms of lack of representative data or formal fairness optimization constraints to be applied to automated decision-making systems. However, these discussions sidestep deeper issues with data used in AI, including problematic categorizations and the extractive logics of crowdwork and data mining. In this talk, I make two interventions: first, reframing data as a form of infrastructure and, as such, implicating politics and power in the construction of datasets; and second, discussing the development of a research program around the genealogy of datasets used in machine learning and AI systems. These genealogies should be attentive to the constellation of organizations and stakeholders involved in their creation, the intent, values, and assumptions of their authors and curators, and the adoption of datasets by subsequent researchers.

About the series: Artificial Intelligence (AI) systems are poised to offer potentially revolutionary changes to fields as diverse as healthcare and traffic systems. However, there is a growing concern both that deployment of AI systems is increasing social power asymmetries and that ethical attention to those asymmetries requires going beyond technical solutions and incorporating research on unequal social structures. Because AI systems are embedded in social systems, technical solutions to bias need to be contextualized in their interaction with those larger systems. This series explores problems and solutions in making AI more just. The first talk is by Ben Green on Oct. 5, and future speakers include Alex Hanna. Talks will be archived on the Center's YouTube Channel.